LASSO is a popular regularization scheme in linear regression in pursuit of sparsity in coefficient vector

that has been widely used. The method can be used in feature selection in that given the regularization parameter,

it first solves the problem and takes indices of estimated coefficients with the largest magnitude as

meaningful features by solving

$$\textrm{min}_{\beta} ~ \frac{1}{2}\|X\beta-y\|_2^2 + \lambda \|\beta\|_1$$

where \(y\) is response in our method.

do.lasso(X, response, ndim = 2, lambda = 1)Arguments

Value

a named Rdimtools S3 object containing

- Y

an \((n\times ndim)\) matrix whose rows are embedded observations.

- featidx

a length-\(ndim\) vector of indices with highest scores.

- projection

a \((p\times ndim)\) whose columns are basis for projection.

- algorithm

name of the algorithm.

References

Tibshirani R (1996). “Regression Shrinkage and Selection via the Lasso.” Journal of the Royal Statistical Society. Series B (Methodological), 58(1), 267–288.

Examples

# \donttest{

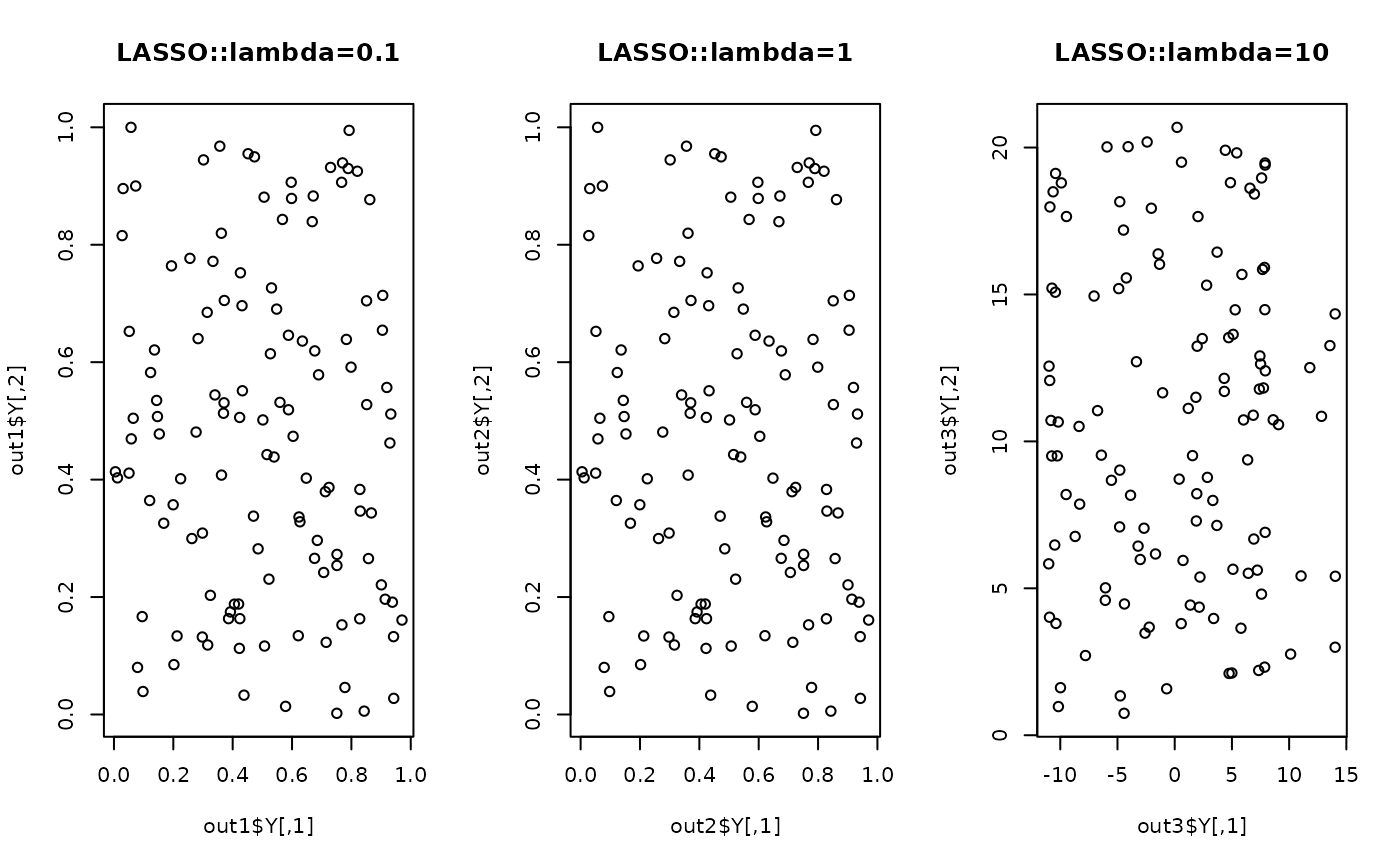

## generate swiss roll with auxiliary dimensions

## it follows reference example from LSIR paper.

set.seed(1)

n = 123

theta = runif(n)

h = runif(n)

t = (1+2*theta)*(3*pi/2)

X = array(0,c(n,10))

X[,1] = t*cos(t)

X[,2] = 21*h

X[,3] = t*sin(t)

X[,4:10] = matrix(runif(7*n), nrow=n)

## corresponding response vector

y = sin(5*pi*theta)+(runif(n)*sqrt(0.1))

## try different regularization parameters

out1 = do.lasso(X, y, lambda=0.1)

out2 = do.lasso(X, y, lambda=1)

out3 = do.lasso(X, y, lambda=10)

## visualize

opar <- par(no.readonly=TRUE)

par(mfrow=c(1,3))

plot(out1$Y, main="LASSO::lambda=0.1")

plot(out2$Y, main="LASSO::lambda=1")

plot(out3$Y, main="LASSO::lambda=10")

par(opar)

# }

par(opar)

# }